Real-Time Machine Vision at the Edge: From Detection to Action


Imagine a busy manufacturing floor where heavy machinery operates alongside human workers. Suddenly, a worker slips near an active robotic arm. In a traditional setup, the overhead camera captures the video, sends it to a remote server, and waits for a response. That round trip can take around 800 milliseconds, and by the time the stop signal arrives, the damage is already done. Now imagine the camera as the brain itself: it detects the fall and cuts power in just 10 milliseconds, without relying on the internet. That is not just faster processing; it is a real-time reflex. This is the power of Edge Machine Vision.


What is Machine Vision with Edge Computing?

Machine Vision (MV) enables systems to interpret visual data. Technically, though, machines don’t “see” objects; they process structured numerical grids in which each pixel encodes light intensity and color.
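To make the “numerical grid” idea concrete, here is a minimal NumPy sketch; the frame size and the painted square are arbitrary choices for illustration. A color frame is a height × width × 3 array of 8-bit values, with channels in BGR order as OpenCV stores them. A single intensity value per pixel can be derived as a weighted sum of the channels, here using the ITU-R BT.601 luma weights, the same weights OpenCV uses for its color-to-gray conversion.

```python
import numpy as np

# A 480-row x 640-column color frame: 3 uint8 values (0-255) per pixel.
# OpenCV's convention orders the channels blue, green, red (BGR).
frame = np.zeros((480, 640, 3), dtype=np.uint8)

# Paint a 100 x 100 pure-red square (B=0, G=0, R=255).
frame[50:150, 50:150] = (0, 0, 255)

# Per-pixel light intensity as a weighted channel sum (ITU-R BT.601 luma
# weights, listed in B, G, R order to match the frame layout).
weights = np.array([0.114, 0.587, 0.299])
gray = frame.astype(np.float64) @ weights  # shape (480, 640)

print(frame.shape)               # (480, 640, 3)
print(frame[100, 100].tolist())  # [0, 0, 255], a pixel inside the square
print(round(gray[100, 100], 3))  # 76.245, i.e. 0.299 * 255
print(gray[300, 400])            # 0.0, black background
```

To the model, the red square is nothing more than a block of rows and columns whose last channel holds 255.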

Traditionally, these grids were transmitted to the cloud for analysis. Edge Computing changes this completely.

The processing happens locally, on devices such as the Radxa Rock 5B+, NVIDIA Jetson Nano, or Google Coral. This creates a self-contained, closed-loop system where sensing and decision-making happen in the same place.

"By moving the brain to the eye, we’ve transformed the camera from a mere recorder into an independent thinker."

The Reality of “Real-Time” Systems

“Real-time” is often misunderstood. It is not just about how fast a model runs; it is about total pipeline latency.

In a typical pipeline, model inference is only one stage among several, and the real bottlenecks are often elsewhere:

- Video decoding

- Frame resizing and pre-processing

- Data movement between components

On edge devices, these inefficiencies show up immediately. There’s no room for poor optimization.
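One practical way to find these bottlenecks is to time every stage of the pipeline, not just the model. The sketch below is a hypothetical helper (`StageTimer` is not from any library) that records wall-clock latency per stage with `time.perf_counter`; the stage functions are simple stand-ins for real decoding, resizing, and inference calls.

```python
import time
from collections import defaultdict
from statistics import mean


class StageTimer:
    """Records wall-clock latency (ms) for each named pipeline stage."""

    def __init__(self):
        self.samples = defaultdict(list)

    def run(self, name, fn, *args):
        """Call fn(*args), record how long it took under `name`, return its result."""
        start = time.perf_counter()
        result = fn(*args)
        self.samples[name].append((time.perf_counter() - start) * 1000.0)
        return result

    def report(self):
        """Mean latency per stage, plus their sum as the per-frame total."""
        means = {name: mean(values) for name, values in self.samples.items()}
        means["total"] = sum(means.values())
        return means


# Hypothetical stand-ins for real decode / pre-process / inference calls.
def decode(raw):
    return list(raw)


def preprocess(frame):
    return [v / 255.0 for v in frame]


def infer(tensor):
    return sum(tensor) > 0.5 * len(tensor)


timer = StageTimer()
for _ in range(10):
    frame = timer.run("decode", decode, range(640 * 3))
    tensor = timer.run("preprocess", preprocess, frame)
    timer.run("infer", infer, tensor)

for stage, ms in timer.report().items():
    print(f"{stage:>10}: {ms:.3f} ms")
```

Because the total is a sum of per-stage means, it is only meaningful when every stage runs once per frame, as it does in this loop.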

Real-Time Human Pose Analysis & Classification

To put this into practice, we developed a system for real-time human pose analysis, estimation, and classification that runs entirely on an edge device.

Problem Statement: The goal was simple but challenging:

- Detect humans

- Estimate skeletal structure

- Classify behavior (Sitting, Standing, Walking, Running, Sleeping, Falling)

- All in real-time, without cloud dependency

The Live Workflow: How It Works

The system does not rely on recorded videos or uploaded images; it processes a live, direct stream from the webcam through a high-performance pipeline:

- Live Ingestion & Pre-processing: The pipeline ingests the raw webcam feed via OpenCV. To preserve compute resources, each frame is downscaled to 640 × 480 pixels.

- Skeleton Estimation: The system uses YOLOv8n-pose, the nano variant of the YOLOv8 pose model, to extract 17 distinct anatomical landmarks (eyes, shoulders, elbows, knees, etc.) from each live frame.

- Coordinate Normalization (The "Secret Sauce"): Because a person’s distance from the camera changes their raw pixel coordinates, the system re-expresses every landmark relative to the person. It makes the center of the person’s bounding box the origin (0, 0) and scales joint positions by the box size, so the features no longer depend on where the person stands in the frame or how large they appear. This keeps the classification logic accurate regardless of depth.

- Classification: These normalized vectors are fed into a custom-trained artificial neural network (ANN) and classification logic to predict the action (e.g., "Falling").
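The normalization and classification steps above can be sketched in NumPy as follows. This is a minimal sketch under stated assumptions, not the project's code: it divides by the longer side of the box (the description only says "relative to the box size"), and the classifier is a hypothetical one-hidden-layer network with random, untrained weights standing in for the custom-trained ANN. In a live pipeline, the pixel keypoints and boxes would come from the pose model; the Ultralytics package, for example, exposes them as `results[0].keypoints.xy` and `results[0].boxes.xyxy`.

```python
import numpy as np

LABELS = ["Sitting", "Standing", "Walking", "Running", "Sleeping", "Falling"]


def normalize_keypoints(keypoints, box):
    """Re-express (17, 2) pixel keypoints relative to the person's box.

    keypoints: (x, y) pixel coordinates, one row per joint.
    box: (x1, y1, x2, y2) bounding box in the same pixel units.
    Returns a flat vector of 34 floats.
    """
    kp = np.asarray(keypoints, dtype=np.float64)
    x1, y1, x2, y2 = map(float, box)
    center = np.array([(x1 + x2) / 2.0, (y1 + y2) / 2.0])
    # Assumption: scale by the longer box side so the pose's aspect ratio
    # survives (a lying person stays "wide", a standing one stays "tall").
    scale = max(x2 - x1, y2 - y1, 1e-6)
    return ((kp - center) / scale).ravel()


def mlp_predict(features, w1, b1, w2, b2):
    """Hypothetical classifier: one ReLU hidden layer, softmax over LABELS."""
    hidden = np.maximum(0.0, features @ w1 + b1)
    logits = hidden @ w2 + b2
    probs = np.exp(logits - logits.max())  # shift by max for numerical stability
    probs /= probs.sum()
    return LABELS[int(np.argmax(probs))], probs


# Random, untrained weights (34 inputs -> 16 hidden -> 6 classes), for shape only.
rng = np.random.default_rng(0)
w1, b1 = rng.normal(size=(34, 16)), np.zeros(16)
w2, b2 = rng.normal(size=(16, 6)), np.zeros(6)

# The same pose seen near the camera and, half-size and shifted, farther away.
near_kp = rng.uniform(100, 300, size=(17, 2))
near_box = (100, 100, 300, 300)
far_kp = near_kp * 0.5 + np.array([200.0, 50.0])
far_box = (250, 100, 350, 200)

f_near = normalize_keypoints(near_kp, near_box)
f_far = normalize_keypoints(far_kp, far_box)
print(np.allclose(f_near, f_far))  # True: position and scale are factored out

label, probs = mlp_predict(f_near, w1, b1, w2, b2)
print(label, probs.round(3))  # arbitrary here, since the weights are untrained
```

The `np.allclose` check shows why normalization matters: the same pose, seen at half the size in a different part of the frame, yields the same 34-value feature vector, so the classifier only has to learn the shape of the pose.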

Training & Implementation Process

The development process began with a custom Python script designed to scrape diverse human pose images from Bing.

These images were processed through the YOLO model to extract skeletal landmarks, which were saved as structured CSV coordinate files. This data formed the foundation for training the secondary ANN classifier.
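One possible layout for such a CSV dataset is one row per detected person: 34 coordinates (x and y for each of the 17 landmarks) followed by a class label. The sketch below uses only Python's `csv` module; the column names, the six-decimal formatting, and attaching one label per image (for example, from the pose category the image was collected for) are assumptions for illustration rather than the project's actual format.

```python
import csv
import io

NUM_JOINTS = 17  # COCO keypoints produced by YOLOv8-pose


def header():
    """Hypothetical column names: x0, y0, ..., x16, y16, label."""
    cols = []
    for j in range(NUM_JOINTS):
        cols += [f"x{j}", f"y{j}"]
    return cols + ["label"]


def write_rows(fileobj, samples):
    """Write (34-float feature vector, label) pairs as CSV rows."""
    writer = csv.writer(fileobj)
    writer.writerow(header())
    for features, label in samples:
        if len(features) != 2 * NUM_JOINTS:
            raise ValueError(f"expected {2 * NUM_JOINTS} values, got {len(features)}")
        writer.writerow([f"{v:.6f}" for v in features] + [label])


def read_rows(fileobj):
    """Load the CSV back as a feature matrix X and a label list y."""
    reader = csv.reader(fileobj)
    next(reader)  # skip the header row
    X, y = [], []
    for row in reader:
        X.append([float(v) for v in row[:-1]])
        y.append(row[-1])
    return X, y


# In-memory round trip; a real dataset would use open("poses.csv", "w", newline="").
buf = io.StringIO()
write_rows(buf, [([0.1] * 34, "Standing"), ([-0.2] * 34, "Falling")])
buf.seek(0)
X, y = read_rows(buf)
print(len(X), len(X[0]), y)  # 2 34 ['Standing', 'Falling']
```

Keeping the label as the last column lets the reader split features from targets with a single slice, which is convenient when the file is later loaded to train the classifier.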

Optimization Decision: CPU over NPU

Although the RK3588 includes an NPU, the system runs on the CPU by design.

Why?

Most NPU pipelines (e.g., RKNN) output only raw keypoints, without built-in support for real-time skeletal visualization. For this project, visual feedback mattered.

Running on CPU allowed:

- Custom rendering of skeleton connections via OpenCV

- Greater flexibility in processing logic
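Custom skeleton rendering mostly comes down to knowing which keypoints to connect. The sketch below assumes the standard 17-point COCO keypoint order, which YOLOv8-pose models use, and skips any limb whose endpoints fall below a hypothetical confidence threshold of 0.5. It returns integer pixel segments rather than drawing them, so the connection logic can be checked without a camera.

```python
# COCO 17-keypoint order: 0 nose, 1-2 eyes, 3-4 ears, 5-6 shoulders,
# 7-8 elbows, 9-10 wrists, 11-12 hips, 13-14 knees, 15-16 ankles
# (for indices 1-16, odd = left side, even = right side).
COCO_SKELETON = [
    (15, 13), (13, 11), (16, 14), (14, 12), (11, 12),        # legs and hips
    (5, 11), (6, 12), (5, 6),                                # torso
    (5, 7), (6, 8), (7, 9), (8, 10),                         # arms
    (1, 2), (0, 1), (0, 2), (1, 3), (2, 4), (3, 5), (4, 6),  # face and neck
]


def skeleton_segments(keypoints, confidences, min_conf=0.5):
    """Return ((x1, y1), (x2, y2)) integer pixel pairs for each drawable limb.

    keypoints: 17 (x, y) pixel positions; confidences: 17 scores in [0, 1].
    A limb is skipped if either endpoint is below min_conf (hypothetical
    threshold), which avoids drawing lines to joints the model did not see.
    """
    segments = []
    for a, b in COCO_SKELETON:
        if confidences[a] < min_conf or confidences[b] < min_conf:
            continue
        pa = (int(round(keypoints[a][0])), int(round(keypoints[a][1])))
        pb = (int(round(keypoints[b][0])), int(round(keypoints[b][1])))
        segments.append((pa, pb))
    return segments


# Example: a fake pose where the nose (index 0) was detected with low confidence.
kps = [(100 + 5 * i, 50 + 20 * i) for i in range(17)]
conf = [0.9] * 17
conf[0] = 0.2
segs = skeleton_segments(kps, conf)
print(len(COCO_SKELETON), len(segs))  # 19 17: the two nose-to-eye limbs are dropped
```

Each returned segment can then be drawn onto the frame with OpenCV, for example `cv2.line(frame, pa, pb, (0, 255, 0), 2)`, with `cv2.circle` marking the joints themselves.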

Final Outcome

The system achieves:

- 20+ FPS processing throughput

- Smooth live video feed

- Real-time skeletal overlay

- Instant behavioral classification
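A 20 FPS target leaves roughly 50 ms (1000 / 20) per frame for the entire pipeline, from capture to classification. Throughput itself can be tracked with a sliding-window counter such as the hypothetical `FpsMeter` below. Timestamps are passed in explicitly so the example is deterministic; a live loop would pass `time.perf_counter()` after each processed frame.

```python
from collections import deque


class FpsMeter:
    """Frames per second over a sliding window of recent frame timestamps."""

    def __init__(self, window=30):
        self.stamps = deque(maxlen=window)  # oldest timestamps fall off automatically

    def tick(self, now):
        """Record a frame finished at time `now` (seconds); return the current FPS."""
        self.stamps.append(now)
        if len(self.stamps) < 2:
            return 0.0
        elapsed = self.stamps[-1] - self.stamps[0]
        # N timestamps span N - 1 frame intervals.
        return (len(self.stamps) - 1) / elapsed if elapsed > 0 else 0.0


meter = FpsMeter(window=30)
fps = 0.0
for i in range(60):
    fps = meter.tick(i * 0.05)  # simulated: one frame every 50 ms
print(round(fps, 1))  # 20.0
print(1000.0 / 20)    # 50.0, the per-frame budget in ms at 20 FPS
```

A sliding window smooths out single slow frames while still reacting within about a second to a sustained slowdown.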

Security (Airports & Public Spaces)

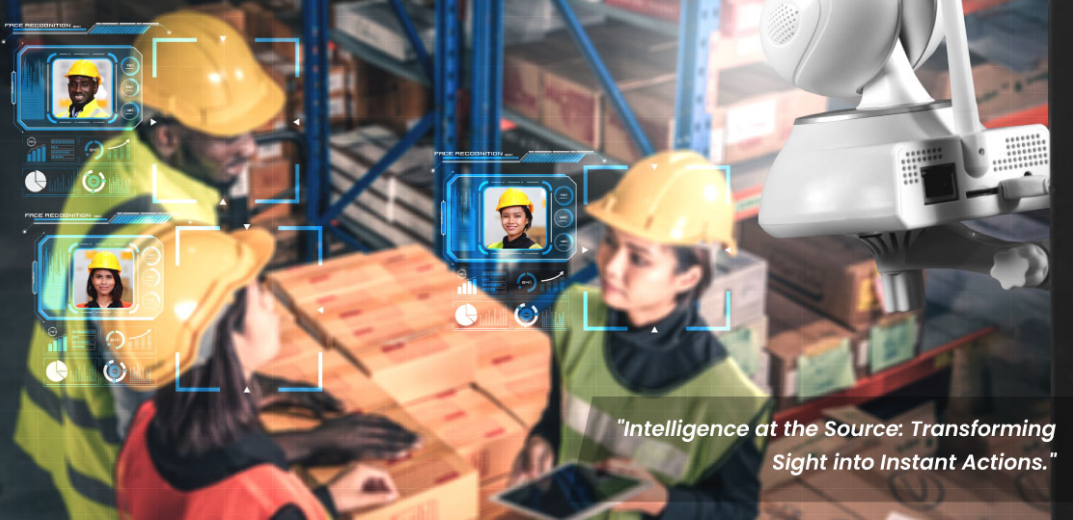

In high-security environments like airports, edge-based vision systems enable intelligent identity verification and real-time monitoring of individuals. From detecting unauthorized access to identifying persons of interest, the system processes visual data instantly at the device level.

By eliminating dependency on external networks, responses are faster and more reliable, while sensitive surveillance data remains securely stored on-site. This ensures both operational efficiency and strict data privacy in critical public infrastructure.

Why Edge Computing Wins for Machine Vision

- Decisions at the Speed of Sight: Processing data locally enables reactions within milliseconds by eliminating server round trips. This speed is essential for safety-critical tasks like autonomous obstacle avoidance.

- Absolute Privacy: Sensitive video feeds never leave the device, keeping visual data off the public internet. This localized approach strengthens data security and helps meet strict privacy regulations.

- Intelligence Without the Heavy Lifting: Devices process 4K streams locally and transmit only small, text-based metadata results. This prevents network congestion and sharply reduces cloud data-transfer costs.

- Uninterrupted Reliability: Edge systems keep operating without an internet connection, supporting 24/7 monitoring uptime. This makes them well suited to remote industrial sites with unstable connectivity.

- Intelligence That Grows With You: Using on-device NPUs avoids expensive, recurring cloud processing fees for every new camera added. This allows vision networks to scale at a largely fixed, up-front hardware cost.
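The bandwidth argument for transmitting only metadata can be checked with simple arithmetic. The sketch compares one uncompressed 8-bit 4K RGB frame with a compact JSON event; the event's field names and values are illustrative assumptions. Real video streams are compressed (for example with H.264), so the practical saving is smaller than this raw comparison suggests, though still substantial.

```python
import json

# One uncompressed 4K frame: 3840 x 2160 pixels, 3 bytes (8-bit R, G, B) each.
WIDTH, HEIGHT, CHANNELS = 3840, 2160, 3
raw_frame_bytes = WIDTH * HEIGHT * CHANNELS

# Hypothetical detection event; field names and values are illustrative only.
event = {
    "camera_id": "cam-07",
    "timestamp": "2024-01-01T12:00:00Z",
    "label": "Falling",
    "confidence": 0.93,
    "bbox": [1210, 640, 1580, 1390],
}
# Compact separators drop the spaces json.dumps inserts by default.
payload = json.dumps(event, separators=(",", ":")).encode("utf-8")

print(raw_frame_bytes)                  # 24883200 (about 24.9 MB)
print(len(payload))                     # size of the event in bytes
print(raw_frame_bytes // len(payload))  # how many events fit in one raw frame
```

An event like this is only sent when something is detected, whereas raw frames would have to be streamed continuously for a cloud model to see them.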

"Giving machines the power to not just see, but to perceive and act: locally, independently, and at the speed of thought."

The Future: The Next Frontier of Edge AI

Building for the Edge-First Future

The shift from cloud to edge is already happening.

The question is: are your systems ready for it?

At Dotcom IoT, we focus on engineering systems where intelligence doesn’t depend on connectivity. From selecting the right hardware platforms to optimizing full vision pipelines, the goal is simple: enable faster decisions, stronger reliability, and real-world responsiveness.

As edge capabilities continue to evolve, building systems that can sense, process, and act locally will no longer be an advantage; it will be the baseline.

“At its core, intelligence is less about processing more, and more about acting faster.”



Tags: Edge Computing, EdgeAI, Machine Vision

Saurabh Mishra is an AI/ML Developer specializing in bringing intelligence to the source at Dotcom IoT. His work focuses on creating "reflex-driven" systems that prioritize local processing, ultra-low latency, and absolute data privacy.